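Plugin

The Hadoop 1.0.2 distribution ships only the source code of the plugin, under src/contrib/eclipse-plugin, so here is a matching Eclipse plugin that I built and packaged myself:

Download link

After downloading, drop the file into the eclipse/dropins directory. eclipse/plugins also works, but dropins is the lighter-weight option and the one I recommend. Restart Eclipse, and Map/Reduce appears among the available perspectives.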

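Configuration

Click the blue elephant icon to create a new Hadoop connection:

Note: every field must be filled in correctly, especially any ports you have changed from the defaults and the user name that jobs run as.

For the exact settings, see: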

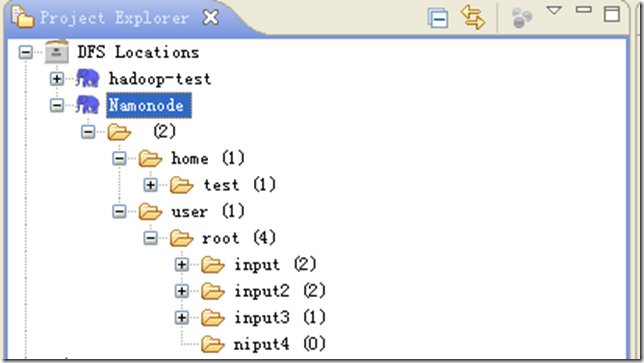

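If everything is set up correctly, the HDFS directory tree appears in the project area.

From there you can manage the HDFS distributed file system as usual: upload files, delete them, and so on.

To prepare for the test below, first create the directory /user/root/input2, then upload two txt files into it:

input1.txt, containing: Hello Hadoop Goodbye Hadoop

input2.txt, containing: Hello World Bye World

HDFS is now ready, so the test can begin.

Hadoop project

Create a new Map/Reduce Project and point it at your local Hadoop directory.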

Create a new test class named WordCountTest, a word-count job whose main method takes the input path and the output path as its two arguments.

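Right-click the class and choose “Run Configurations”. In the dialog that opens, switch to the “Arguments” tab and enter the arguments under “Program arguments”:

hdfs://master:9000/user/root/input2 hdfs://master:9000/user/root/output2

Note: these arguments are meant for local debugging only, not for a real environment.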
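Both arguments should be full HDFS URIs whose host and port match the NameNode configured in the Hadoop connection. As a quick sanity check, the sketch below splits such an argument into its parts using only java.net.URI from the JDK. The class name HdfsArgCheck is made up for illustration and is not part of Hadoop or the plugin.

```java
import java.net.URI;

public class HdfsArgCheck {
    /** Returns "scheme host port path" for one program argument. */
    static String describe(String arg) {
        URI uri = URI.create(arg);
        return uri.getScheme() + " " + uri.getHost() + " "
                + uri.getPort() + " " + uri.getPath();
    }

    public static void main(String[] args) {
        // The two arguments used in this article.
        System.out.println(describe("hdfs://master:9000/user/root/input2"));
        System.out.println(describe("hdfs://master:9000/user/root/output2"));
    }
}
```

A mistyped scheme or port shows up immediately in the printed parts, which is easier to spot than a failure deep inside job submission.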

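Then click “Apply” and “Close”. Now right-click the class again and choose “Run on Hadoop” to run it.

At this point, however, an exception like the following appears: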

12/04/24 15:32:44 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

12/04/24 15:32:44 ERROR security.UserGroupInformation: PriviledgedActionException as:Administrator cause:java.io.IOException: Failed to set permissions of path: \tmp\hadoop-Administrator\mapred\staging\Administrator-519341271\.staging to 0700

Exception in thread "main" java.io.IOException: Failed to set permissions of path: \tmp\hadoop-Administrator\mapred\staging\Administrator-519341271\.staging to 0700

at org.apache.hadoop.fs.FileUtil.checkReturnValue(FileUtil.java:682)

at org.apache.hadoop.fs.FileUtil.setPermission(FileUtil.java:655)

at org.apache.hadoop.fs.RawLocalFileSystem.setPermission(RawLocalFileSystem.java:509)

at org.apache.hadoop.fs.RawLocalFileSystem.mkdirs(RawLocalFileSystem.java:344)

at org.apache.hadoop.fs.FilterFileSystem.mkdirs(FilterFileSystem.java:189)

at org.apache.hadoop.mapreduce.JobSubmissionFiles.getStagingDir(JobSubmissionFiles.java:116)

at org.apache.hadoop.mapred.JobClient$2.run(JobClient.java:856)

at org.apache.hadoop.mapred.JobClient$2.run(JobClient.java:850)

at java.security.AccessController.doPrivileged(Native Method)

at javax.security.auth.Subject.doAs(Subject.java:396)

at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1093)

at org.apache.hadoop.mapred.JobClient.submitJobInternal(JobClient.java:850)

at org.apache.hadoop.mapreduce.Job.submit(Job.java:500)

at org.apache.hadoop.mapreduce.Job.waitForCompletion(Job.java:530)

at com.hadoop.learn.test.WordCountTest.main(WordCountTest.java:85)

This is a file-permission problem specific to Windows. On Linux the job runs normally and the problem does not occur.

The fix is to edit the checkReturnValue method in /hadoop-1.0.2/src/core/org/apache/hadoop/fs/FileUtil.java and comment out its body. This is a crude fix, but on Windows the check can safely be skipped.
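A sketch of what the patched method might look like follows. The method shape is assumed from the Hadoop 1.0.x source and should be compared against your own copy of FileUtil.java before editing. So that the example compiles without Hadoop on the classpath, a plain short stands in for Hadoop's FsPermission type, and the class name FileUtilPatchSketch is made up.

```java
import java.io.File;
import java.io.IOException;

public class FileUtilPatchSketch {
    // Assumed shape of FileUtil.checkReturnValue. The real method takes an
    // FsPermission; a short replaces it here so the sketch compiles alone.
    static void checkReturnValue(boolean rv, File p, short permission)
            throws IOException {
        // Original check, commented out so that a failed permission change
        // on Windows no longer aborts job submission:
        // if (!rv) {
        //     throw new IOException("Failed to set permissions of path: " + p
        //             + " to " + String.format("%04o", permission));
        // }
    }

    public static void main(String[] args) throws IOException {
        // rv = false is the case that raised the IOException in the trace.
        checkReturnValue(false, new File("\\tmp\\hadoop-Administrator"), (short) 0700);
        System.out.println("no exception thrown");
    }
}
```

Because the check is removed entirely, real permission failures are also silenced, so this change belongs only in a jar used for local development on Windows.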

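Recompile and repackage hadoop-core-1.0.2.jar, then use it to replace the hadoop-core-1.0.2.jar in the hadoop-1.0.2 root directory.

A modified build, hadoop-core-1.0.2-modified.jar, is provided here; use it in place of the original hadoop-core-1.0.2.jar.

After replacing the jar, refresh the project, make sure the jar dependencies are set correctly, and run WordCountTest again.

On success, refresh the HDFS directory in Eclipse and the newly generated output2 directory appears:

Click the “part-r-00000” file to see the sorted result: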

Bye 1

Goodbye 1

Hadoop 2

Hello 2

World 2
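The counts above can be reproduced without a cluster. The sketch below applies the same map and reduce steps to the two input lines in plain Java; it is only an illustration of the word-count logic, not the WordCountTest class itself, and the name LocalWordCount is made up. A TreeMap keeps the keys sorted, which mirrors the sorted keys in part-r-00000.

```java
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

public class LocalWordCount {
    /** Counts whitespace-separated tokens; the TreeMap keeps keys sorted. */
    static Map<String, Integer> count(List<String> lines) {
        Map<String, Integer> counts = new TreeMap<>();
        for (String line : lines) {
            // "map" step: every token stands for a (word, 1) pair
            for (String word : line.trim().split("\\s+")) {
                if (word.isEmpty()) {
                    continue; // blank line
                }
                // "reduce" step: sum the ones per word
                counts.merge(word, 1, Integer::sum);
            }
        }
        return counts;
    }

    public static void main(String[] args) {
        List<String> lines = Arrays.asList(
                "Hello Hadoop Goodbye Hadoop",  // input1.txt
                "Hello World Bye World");       // input2.txt
        count(lines).forEach((word, n) -> System.out.println(word + "\t" + n));
    }
}
```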

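The program can also be debugged as usual: set breakpoints, then right-click -> Debug As -> Java Application. Note that the output directory must be deleted manually before every run.

In addition, the plugin automatically generates a jar file and other files, including some Hadoop-specific configuration, under workspace\.metadata\.plugins\org.apache.hadoop.eclipse in the Eclipse workspace.

The remaining details are best discovered by trying it out.

Exceptions encountered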

org.apache.hadoop.ipc.RemoteException: org.apache.hadoop.hdfs.server.namenode.SafeModeException: Cannot create directory /user/root/output2/_temporary. Name node is in safe mode.

The ratio of reported blocks 0.5000 has not reached the threshold 0.9990. Safe mode will be turned off automatically.

at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.mkdirsInternal(FSNamesystem.java:2055)

at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.mkdirs(FSNamesystem.java:2029)

at org.apache.hadoop.hdfs.server.namenode.NameNode.mkdirs(NameNode.java:817)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:39)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:25)

at java.lang.reflect.Method.invoke(Method.java:597)

at org.apache.hadoop.ipc.RPC$Server.call(RPC.java:563)

at org.apache.hadoop.ipc.Server$Handler$1.run(Server.java:1388)

at org.apache.hadoop.ipc.Server$Handler$1.run(Server.java:1384)

at java.security.AccessController.doPrivileged(Native Method)

at javax.security.auth.Subject.doAs(Subject.java:396)

at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1093)

at org.apache.hadoop.ipc.Server$Handler.run(Server.java:1382)

On the master node, turn safe mode off:

# bin/hadoop dfsadmin -safemode leave
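The exception message explains why the NameNode refused the write: the reported block ratio was 0.5000, well below the 0.9990 threshold. The sketch below restates that comparison in plain Java. It is a simplified illustration, not the NameNode's actual implementation, and the class and method names are hypothetical.

```java
public class SafeModeSketch {
    /** True while the reported fraction of blocks is still below the threshold. */
    static boolean inSafeMode(long reportedBlocks, long totalBlocks, double threshold) {
        if (totalBlocks == 0) {
            return false; // nothing to wait for in this simplified model
        }
        double ratio = (double) reportedBlocks / totalBlocks;
        return ratio < threshold;
    }

    public static void main(String[] args) {
        // Half of the blocks reported, matching the ratio 0.5000 in the message.
        System.out.println(inSafeMode(1, 2, 0.999));
        // All blocks reported: the threshold is met.
        System.out.println(inSafeMode(2, 2, 0.999));
    }
}
```

As the message also states, safe mode is turned off automatically once enough blocks have been reported, so waiting for the DataNodes to finish reporting is an alternative to forcing it off.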

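How to package

Packaging the Map/Reduce project as a jar is straightforward and needs little explanation. Just make sure that the META-INF/MANIFEST.MF file in the jar contains a Main-Class entry: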

Main-Class: com.hadoop.learn.test.TestDriver

If the project uses third-party jars, add a Class-Path entry to MANIFEST.MF as well.
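For readers who assemble the jar programmatically, the two entries can be produced with the JDK's java.util.jar.Manifest class. This is a minimal sketch: the class name ManifestSketch and the dependency path lib/example-dep.jar are placeholders, not files from this project.

```java
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.util.jar.Attributes;
import java.util.jar.Manifest;

public class ManifestSketch {
    static Manifest build(String mainClass, String classPath) {
        Manifest manifest = new Manifest();
        Attributes attrs = manifest.getMainAttributes();
        // Set Manifest-Version first; without it the other main
        // attributes are skipped when the manifest is written.
        attrs.put(Attributes.Name.MANIFEST_VERSION, "1.0");
        attrs.put(Attributes.Name.MAIN_CLASS, mainClass);
        // Class-Path holds space-separated paths relative to the jar.
        attrs.put(Attributes.Name.CLASS_PATH, classPath);
        return manifest;
    }

    public static void main(String[] args) throws IOException {
        Manifest manifest = build("com.hadoop.learn.test.TestDriver",
                "lib/example-dep.jar"); // placeholder dependency
        ByteArrayOutputStream out = new ByteArrayOutputStream();
        manifest.write(out);
        System.out.print(out.toString("UTF-8"));
    }
}
```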

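You can also use the MapReduce Driver wizard that the plugin provides. A driver lets you run a job in Hadoop by specifying an alias, which is especially useful when the jar contains several Map/Reduce jobs.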

A MapReduce Driver only needs a main function that registers each job under an alias.
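Hadoop's own example jobs use org.apache.hadoop.util.ProgramDriver for this kind of alias dispatch. To stay runnable without Hadoop on the classpath, the sketch below only imitates the idea in plain Java: the first argument selects a registered program and the remaining arguments are passed to it. AliasDriverSketch and the job body are hypothetical stand-ins, not the driver class from this project.

```java
import java.util.Arrays;
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.function.Function;

public class AliasDriverSketch {
    private final Map<String, Function<String[], Integer>> programs =
            new LinkedHashMap<>();

    /** Registers a program under an alias. */
    void addProgram(String alias, Function<String[], Integer> program) {
        programs.put(alias, program);
    }

    /** Runs the program registered under args[0] with the remaining arguments. */
    int run(String[] args) {
        if (args.length == 0 || !programs.containsKey(args[0])) {
            System.err.println("Valid program names are: " + programs.keySet());
            return -1;
        }
        String[] rest = Arrays.copyOfRange(args, 1, args.length);
        return programs.get(args[0]).apply(rest);
    }

    public static void main(String[] args) {
        AliasDriverSketch driver = new AliasDriverSketch();
        // Stand-in for the real job: it only echoes its arguments.
        driver.addProgram("testcount", rest -> {
            System.out.println("would run word count with " + Arrays.toString(rest));
            return 0;
        });
        int exitCode = driver.run(new String[] {"testcount", "input2", "output3"});
        System.out.println("exit code " + exitCode);
    }
}
```

With several jobs registered, one jar can serve them all, and the alias on the command line decides which one runs.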

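One tip: right-click the MapReduce Driver class and choose Run on Hadoop, and a jar is generated automatically under workspace\.metadata\.plugins\org.apache.hadoop.eclipse in the Eclipse workspace. Upload that jar to HDFS, or to the Hadoop root directory on the remote machine, and run it: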

# bin/hadoop jar LearnHadoop_TestDriver.java-460881982912511899.jar testcount input2 output3

OK, that is the end of this article.